Introduction

Every successful Generative AI solution relies on prompts to bridge the gap between human intention and machine creativity. Prompts guide the behavior of Large Language Models (LLMs): they are the language through which humans interact with the AI system and instruct it to generate responses.

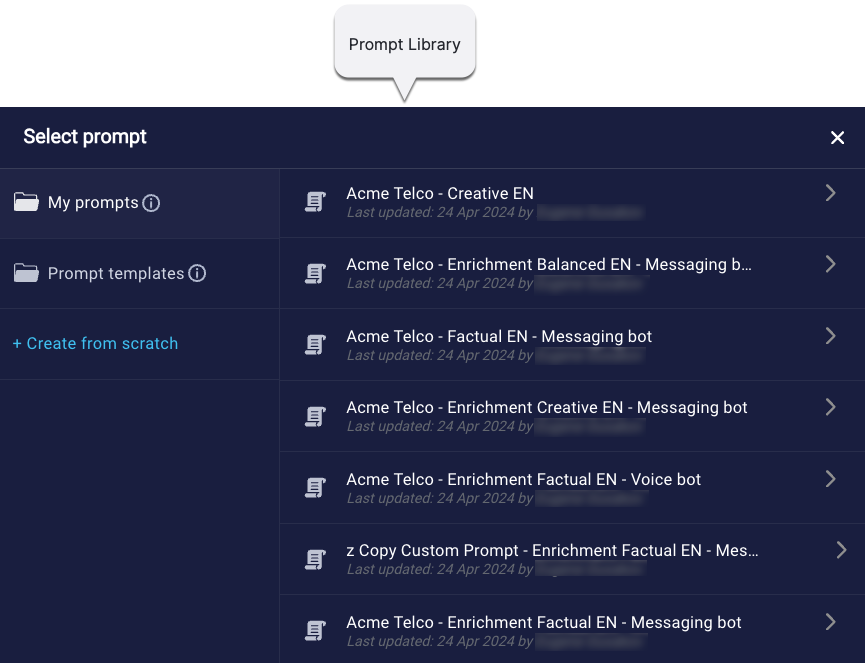

Conversational Cloud’s Prompt Library is the interface you use to select, create, and manage the prompts in your conversational AI solution. It’s designed to accelerate and simplify these processes, so you can unlock the full potential of Generative AI more easily.

Not familiar with LivePerson’s trustworthy Generative AI solution? Get acquainted and get started in our Community Center.

Key benefits

- Customization that aligns with your brand’s identity: Tailor prompts to reflect your brand’s unique voice and tone.

- Efficiency and cost savings: Quickly deploy Generative AI conversational assistance and LLM-powered bots using pre-built prompts validated by LivePerson’s data science experts, reducing both time to value and operational costs.

- An enhanced consumer experience: Use well-crafted, customized prompts for smoother, more personalized responses that drive customer satisfaction and greater containment.

- Self-service changes: You’re in control. Make prompt changes whenever you need to.

Exposure points

You can use the Prompt Library to create and manage prompts for Generative AI solutions using:

- Automated conversation summaries to summarize ongoing and historical conversations

- Conversation Assist to offer LLM-enriched answers to agents

- Conversation Builder to automate LLM-enriched answers to consumers

- Conversation Builder to route consumers conversationally